Welcome to ExtremeHW


Posts: 199 | Days Won: 2 | Feedback: 0%


Posts posted by mouacyk

-

On 20/09/2022 at 12:25, UltraMega said:

RT performance getting a major boost as expected.

Just wanna be careful with this statement. DLSS 3.0 brings no advancement to ray tracing. SER (Shader Execution Reordering) brings up to 1.25x RT performance, and that's about it. Other uplifts specific to RT may come from the new node and additional hardware units, but they're not going to be anywhere near the claimed 4x.

The larger portion of the 4x is coming from the new frame generation, which is known as Optical Flow and has been used with SVP (SmoothVideo Project) since Turing. There is no ray tracing happening in this step, because it sits outside of the game engine and only has access to pixels from already rendered frames and the motion vectors (direction) of those pixels. At best, it's another trick, one of several that NVIDIA is running out of when it comes to RT performance. A more noteworthy trick, one that is directly part of the RT pipeline and needs to be in the engine, is the denoiser, which has gotten significant upgrades and was featured on Two Minute Papers. I appreciate RT, but NV is coming up short each gen, and this time they've come up with the wrong compromise. RT is about fidelity. Frame generation undoes ALL of that.
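To make the "pixels and motion vectors only" point concrete, here is a toy forward-warping frame interpolator. It is a generic motion-compensated interpolation sketch, not NVIDIA's Optical Flow or DLSS 3 algorithm; the grayscale 2D-list frame layout, the `interpolate_frame` name, and the "larger motion wins" tie-break (a crude stand-in for depth testing) are all assumptions for illustration.

```python
from typing import List, Optional, Tuple

# Hypothetical layout: grayscale frames as 2D lists, one (dx, dy) vector per pixel.
Frame = List[List[int]]
MotionField = List[List[Tuple[int, int]]]


def interpolate_frame(prev: Frame, nxt: Frame, motion: MotionField, t: float = 0.5) -> Frame:
    """Synthesize an in-between frame from two rendered frames plus motion vectors.

    Each pixel of `prev` is pushed a fraction `t` along its motion vector.
    No geometry, lights, or rays are consulted: only pixels and vectors.
    """
    h, w = len(prev), len(prev[0])
    out: List[List[Optional[int]]] = [[None] * w for _ in range(h)]
    # Assumption: on collisions the pixel with larger motion wins (toy depth test).
    winner = [[-1] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            dx, dy = motion[y][x]
            tx, ty = x + int(dx * t), y + int(dy * t)
            mag = abs(dx) + abs(dy)
            if 0 <= tx < w and 0 <= ty < h and mag > winner[ty][tx]:
                out[ty][tx] = prev[y][x]
                winner[ty][tx] = mag
    # Holes (disoccluded regions) are filled from the next rendered frame.
    return [
        [out[y][x] if out[y][x] is not None else nxt[y][x] for x in range(w)]
        for y in range(h)
    ]


if __name__ == "__main__":
    prev = [[9, 0, 0, 0]]                        # bright object at x=0
    nxt = [[0, 0, 9, 0]]                         # object has moved to x=2
    motion = [[(2, 0), (0, 0), (0, 0), (0, 0)]]
    print(interpolate_frame(prev, nxt, motion))  # [[0, 9, 0, 0]]
```

Nothing in it can trace a ray or look up a material: whatever fidelity the generated frame has is borrowed from the rendered frames on either side of it.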

-

nah, they be prosuming at $3999 usd

-

Damn it, thought L was gonna drop it

-

lmaos a lot

-

best, E!

-

Water-cooled = content, even if it's like 10 years

-

5 minutes ago, The Pook said:

swap the GPU with a Thunderbolt card and run an external dGPU and a 10 GbE Thunderbolt adapter?

kinda defeats the purpose of an ITX system though

10GbE Thunderbolt adapters are expensive as f!

-

On 28/03/2022 at 12:29, Sir Beregond said:

Yeah, considering rising energy costs, it just feels kind of, I don't know...irresponsible? I'm willing to be in the minority for thinking that way in the enthusiast community as I know many won't care in the pursuit of performance. But just seems like going the wrong direction to me. You used to get more performance AND lower power draw. This feels more like hitting a wall and throwing as much power through it as possible to get the performance.

Whatever the f happened? My best guess is that graphics complexity grows quadratically, so even with a generational 1.5x perf improvement, power draw has to keep increasing to make up the gap.
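Putting rough numbers on that guess: suppose (hypothetically) each generation raises some detail factor by 1.5x, work per frame grows with its square, and performance per watt improves by 1.5x. The sketch below computes how much power has to rise to hold frame rate steady; the quadratic model and the `required_power_scale` name are assumptions taken from the guess above, not measured data.

```python
def required_power_scale(detail_step: float, perf_per_watt_gain: float) -> float:
    """Factor by which power must grow to keep frame rate constant.

    Assumption (a guess, not a measured law): work per frame scales with
    the square of a per-generation 'detail' step.
    """
    work_scale = detail_step ** 2
    return work_scale / perf_per_watt_gain


if __name__ == "__main__":
    # Detail up 1.5x -> 2.25x work; efficiency up only 1.5x -> power must rise 1.5x.
    print(required_power_scale(1.5, 1.5))   # 1.5
    # Power only stays flat if efficiency keeps pace with the squared work.
    print(required_power_scale(1.5, 2.25))  # 1.0
```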

-

When did we surpass that other unnameable website?

-

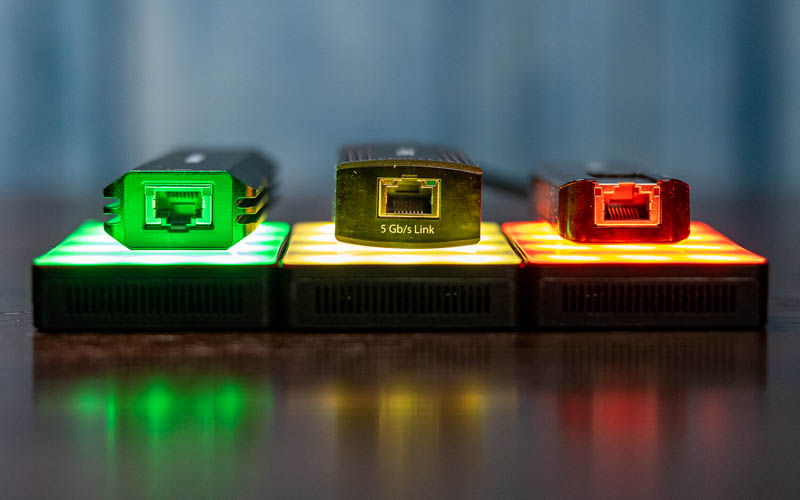

6 hours ago, ENTERPRISE said:

After taking a look at this:

USB 3.1 Gen1 to 5GbE Network Adapter Guide - ServeTheHome (WWW.SERVETHEHOME.COM): In our Buyer's Guide, we summarize our USB 3.1 Gen1 to 5GbE network adapter reviews so our readers can make quick comparisons.

Looks like the Sabrent steals the show with its performance and pricing.

Sabrent NT-SS5G Review USB to 5GbE NIC - ServeTheHome (WWW.SERVETHEHOME.COM): In our Sabrent NT-SS5G review, we see how this USB 3.1 Gen1 to 5GbE adapter based on the Marvell AQC111U controller performs and compares.

Unfortunately, it's physically limited to USB 3.1 Gen1 and thus only performs up to 3.4Gbps instead of somewhere near 5Gbps. This is bordering on false advertising.
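The 3.4Gbps ceiling is roughly what the link arithmetic predicts. USB 3.1 Gen1 signals at 5 Gbit/s but uses 8b/10b line encoding, so at most 4 Gbit/s of payload is available before USB protocol overhead, and a 5GbE NIC behind it can never see a full 5 Gbit/s. A quick check, where 3.4 is the figure quoted above and the function name is just illustrative:

```python
def usb_gen1_payload_gbps(line_rate_gbps: float = 5.0) -> float:
    """Usable bit rate after 8b/10b encoding (8 data bits per 10 line bits)."""
    return line_rate_gbps * 8 / 10


if __name__ == "__main__":
    payload = usb_gen1_payload_gbps()  # encoding ceiling for USB 3.1 Gen1
    measured = 3.4                     # throughput quoted in the post
    ethernet = 5.0                     # nominal 5GbE data rate
    print(f"encoding ceiling: {payload:.1f} Gbit/s")
    print(f"measured / ceiling: {measured / payload:.0%}")
    print(f"measured / 5GbE: {measured / ethernet:.0%}")
```

The remaining gap between the 4 Gbit/s ceiling and the measured figure is USB protocol and driver overhead, which this sketch does not model.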

Anyway, it seems most, if not all, 5GbE adapters suffer from heat issues, throttle, and may even disconnect. It's unfortunate, but 2.5GbE seems like the best value and most reliable option for the time being. We really should be at 10GbE across everything by now.

-

Nah, I'd rather take cash. They are literally joining you at the hip... this way.

-

Have a feeling the tricky bit is actually turning these into usable 3D meshes that can be optimized for real-time use. I suppose there's AI coming for that as well.

-

First, DX12 brought better parallelized code, and now it's tackling data. That's commendable; I'm wondering where Vulkan is at.

-

On 20/03/2022 at 12:21, Avacado said:

Has to be the 9900k for me. Not because it's the most memorable from my youth, but for what it started. The 9900k is singlehandedly the reason I am wedged so far into tech. First time I ever broke 5GHz reliably. First time I ever delidded, first time I ever used a custom loop. That chip is solely responsible for my addiction in its current state. Therefore it has to be my favorite by default.

First time I ever sanded a CPU die and cooled it directly. 5.4GHz (0 AVX offset), 5.1GHz cache, and game stable with HT off is pretty sweet.

-

The X5470 makes the Q6600 look like a piece of crap. It can run at 4GHz on stock voltage and overclock to 4.4GHz, while the Q6600 needs an extreme overclock to reach 3.6GHz.

-

3 minutes ago, J7SC_Orion said:

...once you have all your super-clean ingredients, a couple of other things and tips for your loop assembly...

1.) if you're building a loop with multiple components (such as several rads, pumps, blocks), I pre-fill each component separately, including the tubing, with liquids before closing the adjacent parts of the loop. This makes even more sense when you have 1200++ 60 ++ loop components re. subsequent air-bubble chasing and bleeding.

2.) especially with 1.) but even w/o it, I am very mindful of touching certain parts such as fittings with my hands, or even breathing on them. No sense having all the clean / distilled / deionized products for a loop, then messing things up by breathing on an open loop, or checking fittings with your bare hands after you visited the fridge for an extra slice of cheddar and Calabrese pizza (speaking from experience).

I wear gloves now and hold my breath as much as possible, after seeing my last loop turn brown. I also use a leak tester to push water into place rather than blowing into the tubing.

-

Av[a]cado rolls right off the tongue. [o] doesn't quite do it, honestly.

-

Moving the goalposts to deionized water already? Isn't distilled water, flushed and refilled annually or biannually, enough? It's $1.50/gal here.

Thanks to @ArchStanton for the filter idea from the Midlife Crisis thread.

-

Not sure if you knew this, but memory write speed is bugged on all straps except 16x when using the 100MHz bus strap. It could be contributing to instability, and it definitely hurts performance. I was using a 38x multiplier with a 124MHz bus strap to bench at 4.7GHz, because 100x47 wasn't remotely stable (odd). 124x38 was mostly stable for 24/7 use, but I don't run it because temps were too high (>85C) compared to 4.5GHz (<80C).
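For reference, the effective core clock is just bus clock times multiplier, so the two routes to ~4.7GHz above land in almost the same place (124x38 is actually a hair higher). The function name is illustrative only; on platforms where memory and cache clocks are also derived from the bus clock, raising it moves those too, which this sketch does not model.

```python
def core_clock_mhz(bclk_mhz: float, multiplier: int) -> float:
    """Effective core clock: bus (base) clock times the CPU multiplier."""
    return bclk_mhz * multiplier


if __name__ == "__main__":
    # Combinations mentioned in the post.
    for bclk, mult in [(100, 47), (124, 38), (100, 45)]:
        print(f"{bclk} MHz x {mult} = {core_clock_mhz(bclk, mult):.0f} MHz")
```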

-

wth am I looking at? Probably needs some more angles.

-

21 hours ago, pioneerisloud said:

I'm torn. On one hand, I dislike Nvidia because of their monopolistic tactics they've used. I dislike that they're ALWAYS closed source (Gsync, PhysX, etc). I dislike that they pay game developers to optimize JUST for their equipment at times. Lots of things I dislike about them. So in a way, I almost feel like this is just absolutely hilarious. But at the same time, this is horrible for their customers, and especially for people naive enough to fall for the malware.

On the one hand, your wallet has power. On the other hand, only thieves have power. Pick.

-

Probably going to hang onto my 1680v2 and ASRock Xtreme 9 also, for novelty purposes. It serves as a nice reminder that corporate planning doesn't control everything, in deep contrast to these days.

-

Look at dem fins

-

The word "enthusiast" is unwarranted nowadays but continues to be parroted in marketing and tech journalism, even though it technically died with Maxwell. Since then, it's been mostly influenziasts. Fully locked 2080 Ti at $999, wtafh!

-

Portal RTX Available Now (in Software News)

1280x480 (DLSS Ultra Performance) upscaled to 3840x1440 runs at around 90fps on a 3080 10G. DLSS Performance (1920x720 internal) doesn't cross 60fps. Looks like some big bottleneck, but GPU usage is 100% in both cases and VRAM isn't maxed out.
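The two internal resolutions match DLSS's per-axis render scale of 1/2 for Performance and 1/3 for Ultra Performance, so Performance mode renders 2.25x as many pixels. If per-pixel path tracing cost dominates, that alone would account for much of the drop; this is one possible reading, not a profiled result, and the helper names below are hypothetical.

```python
from fractions import Fraction

# Per-axis render scales for the two DLSS modes mentioned in the post.
DLSS_SCALE = {
    "Performance": Fraction(1, 2),
    "Ultra Performance": Fraction(1, 3),
}


def internal_resolution(out_w: int, out_h: int, mode: str) -> tuple[int, int]:
    """Resolution DLSS renders at before upscaling to out_w x out_h."""
    s = DLSS_SCALE[mode]
    return int(out_w * s), int(out_h * s)


if __name__ == "__main__":
    out_w, out_h = 3840, 1440
    pixels = {}
    for mode in DLSS_SCALE:
        w, h = internal_resolution(out_w, out_h, mode)
        pixels[mode] = w * h
        print(f"{mode}: {w}x{h} = {w * h:,} pixels")
    ratio = pixels["Performance"] / pixels["Ultra Performance"]
    print(f"Performance renders {ratio:.2f}x the pixels of Ultra Performance")
```

Exact fractions are used so the per-axis division never picks up floating-point error before truncation.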