

Posts posted by tictoc

-

I picked up one of these chairs on sale at Office Depot. https://www.officedepot.com/a/products/510830/WorkPro-Quantum-9000-Series-Ergonomic-MeshMesh/

Excellent chair for the price. After 5 years of daily 6+ hour use, it is still in like-new condition, with only some small cracking of the foam/rubber armrests. Pre-covid I think I paid something like $325, but even at $450 I would still buy it again.

-


On 16/04/2022 at 09:34, Diffident said:

Great minds think alike.

Yesterday I went to look at a 2.5RS for a parts car. No dice on the car, since the tranny was shot and the motor had a bunch of top-end and bottom-end racket. The local MicroCenter is more than an hour from my house, so I decided to stop in and browse around for a bit while I was in the area.

I've had the block and backplate since they were released, and I was just waiting for a reference card at something close to MSRP.

21 hours ago, Sir Beregond said:

Yeah, 5900X at sub $400 is a no brainer for sure!

As for GPU's, most of them seem to be getting very close to their MSRPs now. I know the Micro Center here has had AMD cards sitting on shelves for months now.

So looking at that box, is it a reference style 6900XT?

Yep, reference 6900XT, and props to PowerColor for the minimal packaging. The last thing I need is another giant GPU box.

-


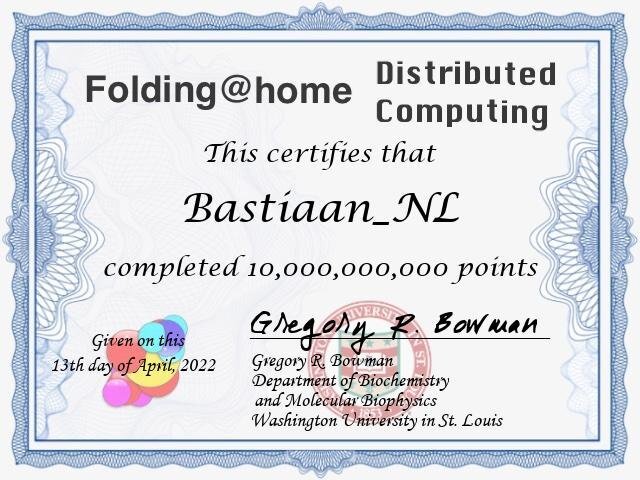

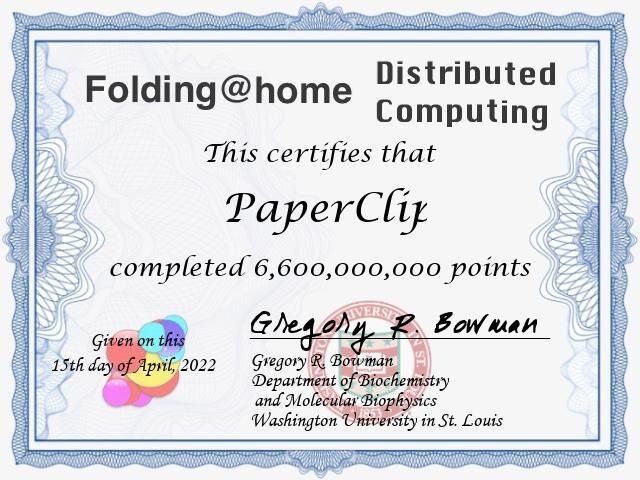

31 minutes ago, NBrock said:

I'm sitting close to 6.7 Billion. The cert only gave me the number of WUs not the points so far.

But according to stats site I have earned 6,692,785,358 points by contributing 53,747 work units.

Here's your points certificate.

To get the cert for points rather than WUs just delete the "&type=wus" off the end of the "My Award" button download link.
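If you would rather not edit the link by hand, here is a minimal sketch of the same idea: drop the `type` parameter from the download URL. The URL below is a made-up placeholder (the real "My Award" link is not shown in this thread), and `drop_query_param` is just an illustrative helper name.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def drop_query_param(url: str, name: str) -> str:
    """Return url with every query parameter called `name` removed."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key != name
    ]
    return urlunsplit(parts._replace(query=urlencode(kept)))


# Placeholder URL, NOT the real certificate link.
example = "https://stats.example.invalid/award?user=someone&team=0&type=wus"
print(drop_query_param(example, "type"))
```

Deleting the text by hand in the address bar does exactly the same thing; the snippet is only handy if you want to script it.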

-


Had some hours of downtime today due to an extended power outage, but all the things are back up and folding now.

-

By default the AppData folder is hidden. Is it just the FAHClient folder that is missing, or can you not see your AppData folder at all?

You can also navigate to the AppData folder by pressing Winkey+R, and then typing %APPDATA% in the Run box.
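If you want to check from a script instead, here is a rough sketch that resolves %APPDATA% and looks for the client folder. It assumes the folder is named FAHClient and sits directly under the roaming AppData directory, which is how it has been described in this thread; `fahclient_dir` is just an illustrative helper name.

```python
import os
from pathlib import Path
from typing import Mapping, Optional


def fahclient_dir(env: Mapping[str, str] = os.environ) -> Optional[Path]:
    """Return the expected FAHClient folder, or None if APPDATA is not set."""
    appdata = env.get("APPDATA")
    if not appdata:
        return None
    # Assumption: the client data lives in a folder called "FAHClient".
    return Path(appdata) / "FAHClient"


folder = fahclient_dir()
if folder is None:
    print("APPDATA is not set (probably not a Windows session)")
elif folder.is_dir():
    print(f"Found {folder}")
else:
    print(f"{folder} does not exist")
```

This works even when AppData is hidden in Explorer, since hidden folders are still reachable by path.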

-

On 31/03/2022 at 21:24, ArchStanton said:

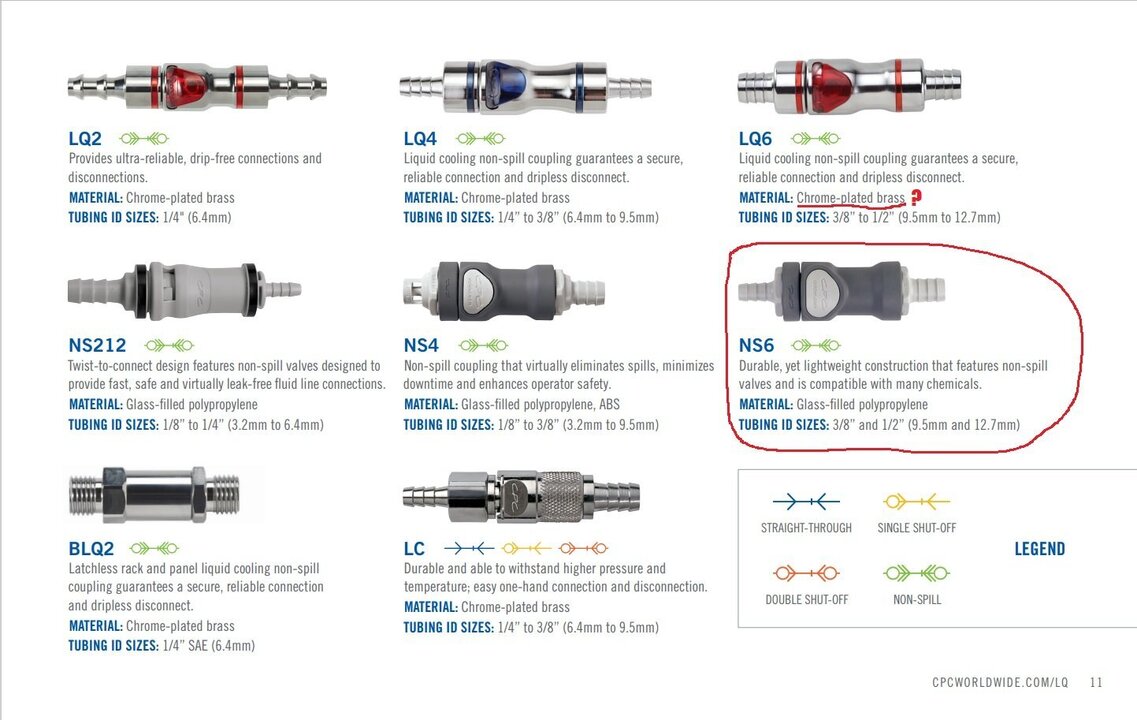

I've convinced myself that I do need to revisit my RAM and actually tune it to my system, rather than rely on the settings I plagiarized from J7. In addition, I've got some QD's coming from Koolance itself. I did some digging and found these:

But pricing and availability didn't seem better than what's available in the DIY PC enthusiast market currently, so I didn't bother researching whether chrome plated brass would function for our purposes or not (I'm guessing yes, but it would be prudent to be 100% sure).

Here are links to a brochure and the main website if anyone wishes to dig deeper:

Liquid Cooling Connectors for Thermal Management : CPC (cpcworldwide.com)

Non-Spill Liquid Cooling Connectors | CPC (cpcworldwide.com)

I think I will likely make more changes to my loop in the future. Probably going LM on both GPU die and CPU IHS. More robust cooling on GPU backplate (I do not anticipate active, just "more"). Planning to use QD's to make maintenance far easier and facilitate connection to a "chiller" (maybe on the "redneck" side of the spectrum of possibilities). I know the gains might be minimal, but for squeezing out every possible drop of cooling performance, I think I will have one of my larger radiators between the CPU and GPU blocks (primarily for the small benefit it might provide when not using a "chiller" of any sort). Aesthetics are going down the toilet, but I am okay with that now as the "benching bug" has bitten me and I am infected.

I have a bunch of the CPC QD's and they work great with not a drop spilled or any issues in the 4 years (maybe longer??) that I've been using them with the external radiators for my main workstation. There was a time when the pricing on those was really good. I think that all ended when EK started selling them stand-alone and with their Predator kits.

-


On 06/04/2022 at 08:11, Supercrumpet said:

That PC is on Windows and since folding is very much a secondary thing on that PC, I don't even know if I have HFM on it. Can you pull logs from the base F@H software?

Otherwise I'd be fine with just using averages. Sorry for putting extra work on you guys.

No worries on any extra work, it's pretty much just a one liner from the log file.

The client stores all the logs locally, and you shouldn't need HFM. I haven't folded on Windows in something like 8 years, but unless something has changed, the logs should be in the FAHClient data folder at:

C:\Users\{username}\AppData\Roaming\FAHClient

-

It should be pretty easy to sort out. Just need to parse the logs from the start of this month to when you removed the passkey, add up the points for the slot with your 2080ti, and then subtract it from your total. The credit estimate in the logs is usually really close to the awarded credit.

@Supercrumpet if you want to attach your logs, I can give them a little grep-fu.
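For anyone who wants to do the adding up themselves, here is a rough sketch of the log parsing described above. It assumes the client writes lines of the form `HH:MM:SS:WUnn:FSnn:Final credit estimate, N points`; check a few lines of your own log before trusting the pattern. The sample lines and the monthly total are made-up numbers for illustration, and you would feed in only the lines from the period you care about.

```python
import re
from typing import Iterable

# Assumed log line format; verify against your own log.txt first.
CREDIT_RE = re.compile(r":FS(\d+):Final credit estimate, ([\d.]+) points")


def slot_points(lines: Iterable[str], slot: int) -> float:
    """Sum the estimated credit of every finished WU for one folding slot."""
    total = 0.0
    for line in lines:
        match = CREDIT_RE.search(line)
        if match and int(match.group(1)) == slot:
            total += float(match.group(2))
    return total


# Illustrative lines only, not taken from a real log.
sample = [
    "01:00:00:WU00:FS00:Final credit estimate, 1000.00 points",
    "02:00:00:WU01:FS01:Final credit estimate, 250.50 points",
    "03:00:00:WU02:FS00:Final credit estimate, 2000.00 points",
    "03:00:01:WU02:FS00:Sending unit results",
]

gpu_points = slot_points(sample, slot=0)
month_total = 10000.0  # made-up total for the month
print(f"slot 0: {gpu_points:.2f}, remainder: {month_total - gpu_points:.2f}")
```

The estimate in the log is not the awarded credit, so expect the result to be close rather than exact.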

-EDIT-

If you're running Linux here's an easy one liner. Just substitute in the correct slot number.

journalctl --utc --since "2022-04-03" -g 'FS00.*points'

-

4 hours ago, ArchStanton said:

Anyone have time to explain the steps required to correctly (if possible) run dual PSUs in a single system (specifically a single motherboard, not one of the "2 systems in one box" builds)? Say, GPU on its own dedicated PSU and the rest of the system on the "primary" PSU. I can envision splitting the output from the "power on/reset/etc." buttons no problem, but do we run into issues with "desynchronized" signals from the two PSUs? I wouldn't think so but can't be 100% sure by force of imagination alone.

I've been using the Add2PSU boards for a long time. https://www.amazon.com/Multiple-Adapter-Connector-Genetek-Electric/dp/B0711WX9MC

Below is the small add-on-board in my workstation. The top 1000W PSU powers two GPUs and the bottom 1300W PSU powers the rest of the system. Absolutely zero issues.

-


@axipher What's the status on your 750ti?

Things have finally slowed down for me, so I'll be around the forums a bit more now.

-

Another vendor locked CPU launch.

Hopefully there is a release for consumer SKUs, so anyone that jumped on WRX80 can at least get one upgrade. Looks to be the final nail in the coffin of the HEDT platform, unless AMD pulls a rabbit out of their hat and releases some non-pro Threadripper 5xxx CPUs.

-


On 17/02/2022 at 13:31, firedfly said:

I didn't know this was a thing. Let me know how well it works. I might need to pick up one or two myself!

I DIY'd one for testing before the Kickstarter was funded, and it worked really well.

-

It's just silly for Corsair to list anything other than the max flow rate. There is almost no way to estimate what the flow rate will be in an "average" loop, at least without doing some pretty involved fluid dynamic calculations. It looks like Corsair did change the spec page and now it says: "800L/h at 2.1m pressure head (1500L/H theoretical max)". The AquaComputer spec sheet is nice, since it includes some numbers for what can be expected in the real world, alongside the actual rating of the pump.

A well "calibrated" bucket and clock, with the return line running to the bucket, will give you an exact measurement.

At the end of the day, as long as water and component temps are acceptable, and the pump doesn't have an unreasonably short life, then that's all that really matters.
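The bucket-and-clock measurement is just volume divided by time. Here is a quick sketch of the arithmetic, converting to L/h so it can be compared with a pump spec sheet. The 2 litres in 30 seconds is a made-up example, not a measurement from any loop.

```python
def flow_rate_l_per_h(litres_collected: float, seconds: float) -> float:
    """Convert a timed bucket fill into litres per hour."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    return litres_collected * 3600.0 / seconds


# Made-up example: 2 litres collected from the return line in 30 seconds.
print(flow_rate_l_per_h(2.0, 30.0))  # 240.0 L/h
```

Letting the bucket fill for longer reduces the effect of start/stop timing error on the result.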

-

We are looking pretty good this month. No ringer cards on the team but look at all those WUs that have been done.

-

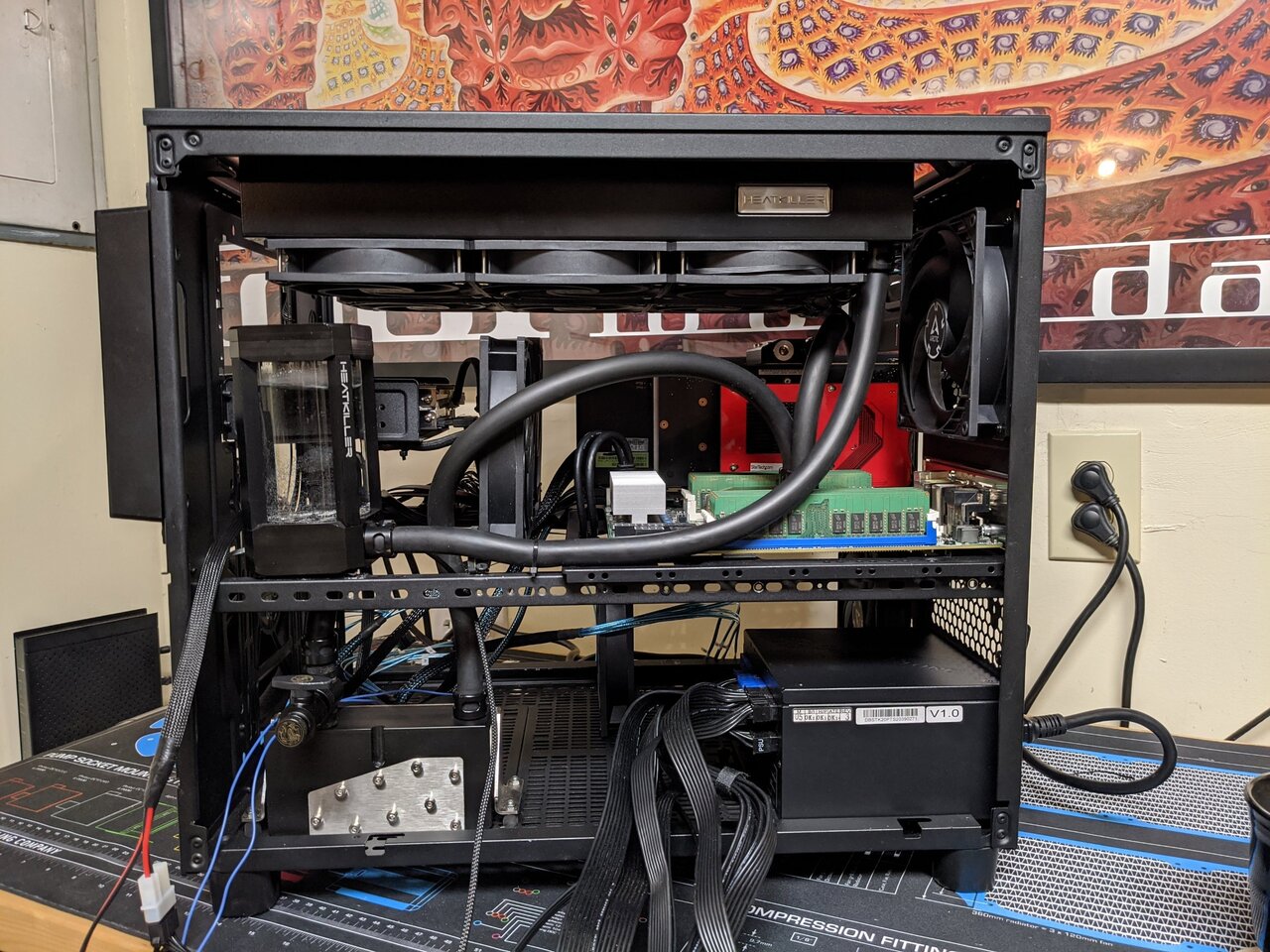

Ready to leak test. All the difficult to access wiring is done, and I'll slap the drives in after it gets a leak test and Blitz Part 2.

This case really isn't big enough for everything that is going in it, but since I've come this far, I'm just going to go ahead with it for now. I'll probably end up dropping everything in a different case in the not too distant future.

Here's a few not so great pics.

Edit: Looking at the pics, and I just noticed that one of the hdd fans is backwards.

-

I didn't get this up and running, but I do have everything except for the wiring done. I will post some pics when I get home this evening.

Part of my goal on downsizing was to offline some data from my main file server, but I didn't get rid of as much data as I thought I would.

Final main storage pool will be an 8-drive btrfs raid10 with 4x 8TB Seagate EXOS and 4x 16TB Seagate EXOS. I'll update the OP once I finalize the rest of the storage.

-

31 minutes ago, damric said:

People running them at max overclock while in dark mode skewed the PPD?

But it's weird that there are even 2 entries for the same GPU.

There are technically two variants of the 750ti. Not much info on the one you posted, but it looks to be an OEM only card with a GK106 die vs the regular 750ti which has a GM107 die. I don't think that card ever even made it out into the wild, except as maybe an engineering sample. It is listed in the NVIDIA driver, and apparently someone must have one, since there are entries in LARS.

750ti OEM: https://www.techpowerup.com/gpu-specs/geforce-gtx-750-ti-oem.c2462

750ti: https://www.techpowerup.com/gpu-specs/geforce-gtx-750-ti.c2548

-


7 hours ago, Scc28 said:

would be my 3090 as cpu folding isnt cutting it points wise on the 5950?

Right on. The ETF handbook is here: https://forums.extremehw.net/topic/1090-extreme-team-folding-manual/#comment-21241

If all that sounds good to you and you are down to fold 24/7, just PM me the following info, and I'll get you added to the team.

EHW Name:

Folding Name:

Folding Team:

Unique Passkey:

Hardware:

-

52 minutes ago, damric said:

I'd hate to help you guys but you should check with @BWG and be sure that handicap multi is right on that GTX 750 Ti. It seems like it should be a lot higher, like much closer to the huge ass multi that Michele has with her GTX 750.

the PPDs are like

GeForce GTX 750 Ti Folding@Home PPD Performance - folding.lar.systems

F@H GeForce GTX 750 Ti performance as of 1/25/2022. Averages across all projects PPD:81,018 - Work Units Per...

and

GeForce GTX 750 Folding@Home PPD Performance - folding.lar.systems

F@H GeForce GTX 750 performance as of 1/25/2022. Averages across all projects PPD:69,207 - Work Units Per...

So something looks off because your PPDs look right but your handicap multi seems too low? I didn't math it, just estimating.

41 minutes ago, Avacado said:

Unless the rules have changed, going to need about 350 sample units before that 750Ti is eligible right?

I'm guessing this is a similar situation to the two Radeon VIIs that are in the database. The 750ti was/is a wildly popular card, so the one to look at is here: https://folding.lar.systems/gpu_ppd/brands/nvidia/folding_profile/gm107_geforce_gtx_750_ti_1389

-


Folding@Home, Team 239902

in Folding@Home


Since you are running it in an open top bench, the easiest thing to do would be to rig up a fan or two directly above the GPU. This will force air down between the cards and add some fresh air for the intake of the top card. I've had lots of open bench machines running all out 24/7, and increasing the airflow directly to the top GPU will probably knock the temps down 10°C or so.